Spyware Goes Retail. Pyongyang Infiltrates the Fortune 500. Privacy Dies on Schedule.

IN THIS ISSUE:

CEO's Perspective

Strategic outlook from Cambrian leadership

I spent most of this week in the kind of quiet, slow-burning frustration that takes hold when you realize a significant problem was entirely avoidable. We already deal with enough new threats. We need to make sure we are not blindsided by known issues and that we adapt as existing conditions evolve. Too many recent challenges felt preventable.

The verification layer has collapsed: Nearly every Fortune 500 CISO surveyed admitted to unknowingly hiring at least one North Korean operative. The largest, best-resourced companies in the world lack the controls to keep North Korean agents off their payrolls. Our hiring processes, our background checks, and our identity verification systems were built for a world that no longer exists. Worse, our mobile devices may have been compromised by an exploit now shared among Russian intelligence, a Turkish surveillance vendor, and a financially motivated criminal crew targeting users across the Gulf and Southeast Asia. All the while, Meta's encryption never really existed for most users, and Brazil declared cloud sovereignty after Microsoft's own lawyers could not guarantee that Brazilian data stays out of American hands. As depressing as these examples are, we have to name and understand them if we hope to fix the broken verification layer across our high-tech systems. To restore trust, organizations need to audit the mechanisms that establish and preserve it.

The competitive dynamic is eating itself: DeepMind CEO Demis Hassabis has spent most of his career trying to build AI responsibly. He attempted three distinct approaches to keeping development safe, and he watched each one fail because the competitive structure of the industry sacrificed every option to speed. I’m a growth-minded entrepreneur and believe in AI’s potential to drive a better future, but this heedless drive forward is more alarming than any individual product decision. When our future depends on the morality of one person, or at best a few people, we have a critically fragile system by definition. We have not figured out how to guarantee privacy while still enabling criminal detection and enforcement, and in the US we are not even trying. Every actor in this issue is behaving rationally within their own system, but by doing so they quietly degrade the system that everyone shares. It is a structural trap, and failing to consider and fix the system as a whole is a leadership failure. Structural traps do not resolve themselves. If this system fails, we can never say we didn’t know. We had clear visibility and lacked the courage to act.

National governance is being abandoned: The Trump AI framework says almost nothing about frontier model safety. The EU AI Act governs existing applications, but not the capabilities that Hassabis and AI pioneer Geoffrey Hinton are warning about. China is accelerating. The US, minus California, is AWOL on the national and global governance stages. The executives reading this newsletter are the leaders who can design the governance layer that governments have abdicated. You do not need to wait for permission. When Meta’s autonomous AI triggered a serious data breach without human authorization this month, we got an early preview of what can happen when deployment outpaces governance. Build your internal frameworks now, while the architecture is still yours to design and not hijacked by agents. For agile, clear-eyed organizations, this is a competitive opening, not just risk management. Governance builds trust, trust drives credibility and intimacy, and both of those demonstrably drive higher returns.

The world does not lack for people who understand what is happening. It is short on people willing to act before circumstances force them to.

-Olaf

On the Radar

The signals affecting the GeoTech landscape this week

DarkSword: The Exploit Kit That Broke the Spyware Business Model

A single iOS exploit chain is now shared among Russian spies, a Turkish surveillance vendor, and financially motivated criminals targeting users across the Gulf and Southeast Asia. Up to 270 million iPhones are vulnerable. The commercial spyware market has become a secondary arms bazaar.

BRIEFING: On March 19, Google’s Threat Intelligence Group, mobile security firm iVerify, and Lookout published coordinated research on DarkSword, an exploit chain that uses six vulnerabilities to fully compromise iPhones running iOS versions 18.4 through 18.7. The chain operates entirely in JavaScript, requiring only that a victim click a link in Safari. Within seconds, attackers gain kernel-level control and exfiltrate credentials, messages, location data, crypto wallet keys, and browsing history before cleaning up and exiting the device.

Google attributed DarkSword campaigns to at least three distinct threat actors. UNC6353, a suspected Russian espionage group, deployed it via compromised Ukrainian government websites to harvest intelligence from Ukrainian targets. PARS Defense, a Turkish commercial surveillance vendor, used it against users in Turkey and Malaysia on behalf of paying customers. A third cluster, tracked as UNC6748, targeted Saudi Arabian iPhone users through a fraudulent Snapchat lookalike site, with indicators pointing toward financial rather than intelligence objectives.

iVerify estimated that roughly 270 million iPhones remain vulnerable to the exploit chain. Lookout found that both DarkSword and the related Coruna exploit kit, discovered two weeks earlier, showed signs of code expansion using large language models. Apple has patched all six vulnerabilities in iOS 26.3, but older devices that cannot update remain exposed.

SO WHAT FOR EXECUTIVES: The path from state-grade exploit to criminal marketplace took months, not years. LLM-assisted code customization is accelerating that timeline further. In addition to mandating current iOS and Android versions, treating mobile device compromise as a tier-one risk, and actively searching for related threats, executives should invest in mobile threat defense platforms that detect exploit-chain behavior at the device level, establish threat intelligence sharing agreements with industry peers, and treat the growing secondary exploit market as a supply chain risk requiring active monitoring. For companies with staff in conflict zones or the Middle East, Southeast Asia, or Eastern Europe, the window between public disclosure and criminal exploitation of a vulnerability is now measured in days.

Pyongyang’s Shadow Workforce: 100,000 Fake Employees Funding Missiles

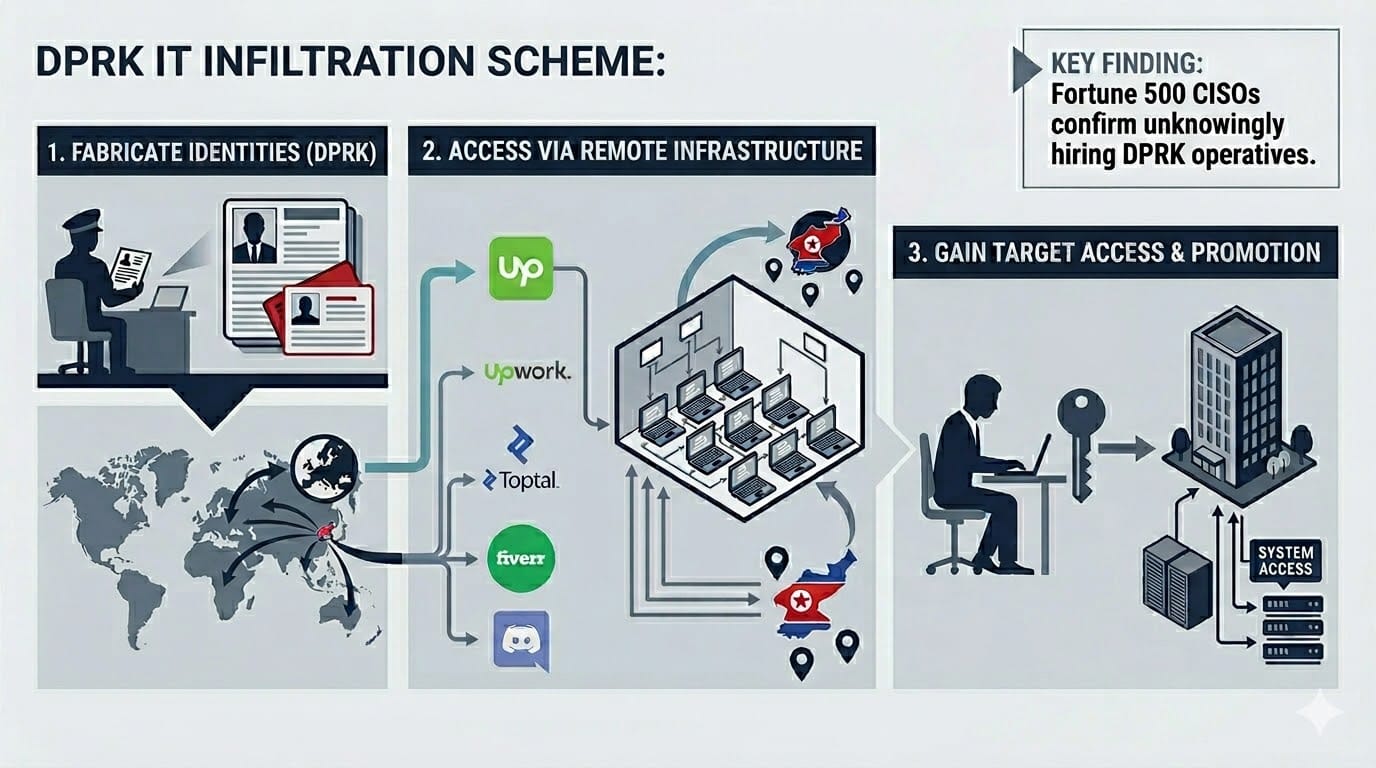

A joint Flare and IBM X-Force report revealed that more than 100,000 North Korean IT workers using stolen identities generate $500 million annually for the regime. Nearly every Fortune 500 CISO surveyed admitted to unknowingly hiring at least one.

BRIEFING: On March 18, cybersecurity firms Flare and IBM X-Force published a joint investigation detailing how the Democratic People’s Republic of Korea (DPRK) deploys thousands of skilled IT professionals to infiltrate companies across North America and Western Europe using stolen and fabricated American identities. A United Nations report estimated the operation generates approximately $500 million annually, with individual workers earning up to $300,000 per year. The U.S. government estimates more than 100,000 North Korean workers are spread across 40 countries.

The operation runs as a structured enterprise. Recruiters screen candidates, facilitators manage placement using fake identities, and brokers in Western countries operate “laptop farms” where multiple devices route remote access back to DPRK operatives. While workers are deployed across more than 40 countries, Flare and IBM found that operational cells frequently stage from China, Laos, and Vietnam. Workers bid on projects across platforms including Upwork, Toptal, Fiverr, and Discord. In some cases, multiple people work behind a single fake employee to ensure unusually high performance, earning promotions and deeper system access. Mandiant, now part of Google Cloud, reported that nearly every Fortune 500 CISO interviewed acknowledged unknowingly hiring at least one North Korean operative.

The U.S. government response directly targets this infrastructure. On March 20, the Treasury Department sanctioned six individuals and two entities specifically for their roles in laundering funds generated by DPRK IT worker schemes. The Department of Justice announced coordinated actions including two indictments, searches of 29 suspected laptop farms across 16 states, and seizure of 29 financial accounts. The schemes fund Pyongyang’s weapons of mass destruction and ballistic missile programs in violation of international sanctions.

SO WHAT FOR EXECUTIVES: This is sanctions evasion industrialized as a technology platform. Every company with remote workers is a potential target, and the operational security of these cells has improved to the point where standard HR vetting and background checks are insufficient. Organizations should implement multi-factor identity verification for remote hires that goes beyond document checks, including live video interviews with behavioral analysis, IP geolocation monitoring during onboarding, and anomaly detection on work patterns such as unusual login hours or VPN routing through known DPRK-affiliated networks. The scale of the problem is creating a market opportunity for identity verification and workforce certification companies that can offer continuous authentication and behavioral biometrics as a service. Companies that unknowingly employ sanctioned-state operatives face regulatory liability under U.S. and international sanctions law. Boards should ask their CISOs and general counsels whether current hiring controls can detect this threat, because the evidence suggests most cannot.

Russia’s Iran War Dividend: Oil Windfalls Fuel a Spring Offensive

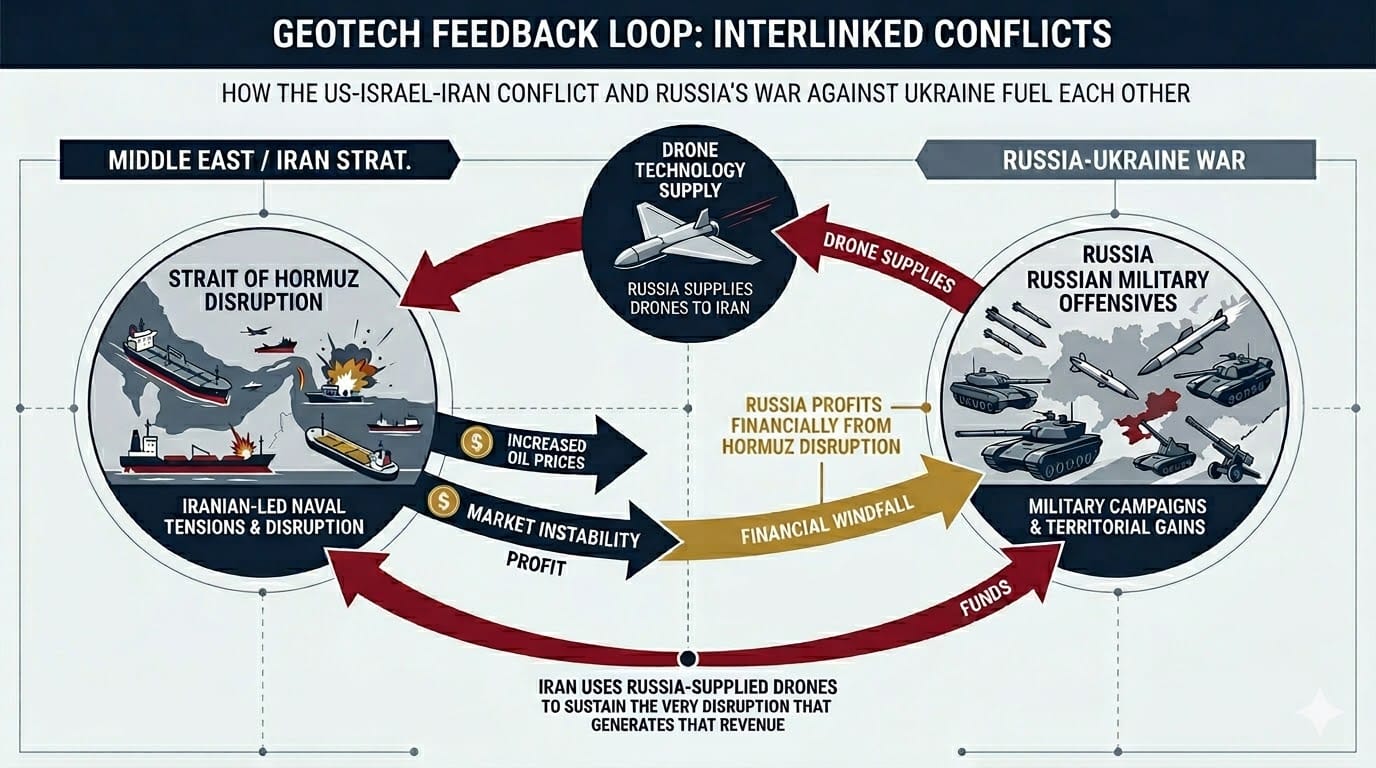

Three weeks into the Iran war, Russia might be the conflict’s biggest beneficiary. Surging oil prices fill Moscow’s war chest while U.S. air defense assets drain toward the Gulf, leaving Ukraine exposed as Russia prepares spring offensives.

BRIEFING: As the U.S. and Israeli war against Iran enters its fourth week, Russia is emerging as the conflict’s principal strategic beneficiary. Iran’s effective closure of the Strait of Hormuz has disrupted approximately 20 percent of global oil supply, driving Brent crude above $126 per barrel at its peak. The International Energy Agency (IEA) has called it the largest supply disruption in the history of the global oil market. The Trump administration temporarily eased sanctions on Russian oil to increase supply, delivering Moscow an estimated $150 million per day in additional revenue with little visible effect on prices. That additional revenue still flows into Russia’s war coffers, to Ukraine’s detriment.

The windfall is landing at a critical moment. Russian forces are building reserves for spring and summer offensives along the 1,200-kilometer front line in Ukraine, with analysts at the Institute for the Study of War noting stepped-up artillery and drone strikes aimed at weakening Ukrainian defenses before ground operations begin. U.S.-brokered peace talks between Russia and Ukraine have been postponed indefinitely as Washington’s diplomatic bandwidth is consumed by the Middle East. Ukrainian President Volodymyr Zelensky told the BBC he has a “very bad feeling” about the war’s impact on Ukraine, warning that Patriot missile supplies could face shortages as air defense assets are redeployed to protect U.S. forces and Gulf allies from Iranian drone and missile attacks. Meanwhile, as Europe struggles with higher energy prices, Hungary has vetoed both a EUR 90 billion aid package for Ukraine and a new sanctions package against Russia. To add insult to injury, Zelensky told European leaders that Russia had supplied Iran with Shahed-type drones manufactured in Russia under Iranian license, which are now being used in attacks across the Middle East. The longer Iran can hold out, the longer the windfall lasts for Russia.

SO WHAT FOR EXECUTIVES: The GeoTech feedback loop between the U.S. and Israeli war on Iran and Russia’s war against Ukraine is now fully closed. One conflict’s technology and economics are fueling the other. For executives, the implications are compounding. Energy costs are rising globally. European natural gas prices have nearly doubled. Supply chains for helium, sulfur, and fertilizer that transit the Gulf are disrupted, touching semiconductor manufacturing, agriculture, and industrial chemicals simultaneously. Companies with exposure to Eastern European operations, Gulf shipping, or energy-intensive infrastructure should stress-test their continuity plans against a scenario where both conflicts persist through the summer, because that is now the baseline forecast. Supply chain executives should consider accelerating the buildout of renewable energy sources and energy storage for enterprise and civil society operations to cushion against dramatic swings in fossil fuel prices.

Brazil’s Data Sovereignty Trap: The BRICS Chair That Can’t Control Its Own Cloud

Brazil is positioning itself as the Global South’s AI sovereignty champion despite hosting its sovereign cloud on Microsoft and giving tax breaks to foreign data centers.

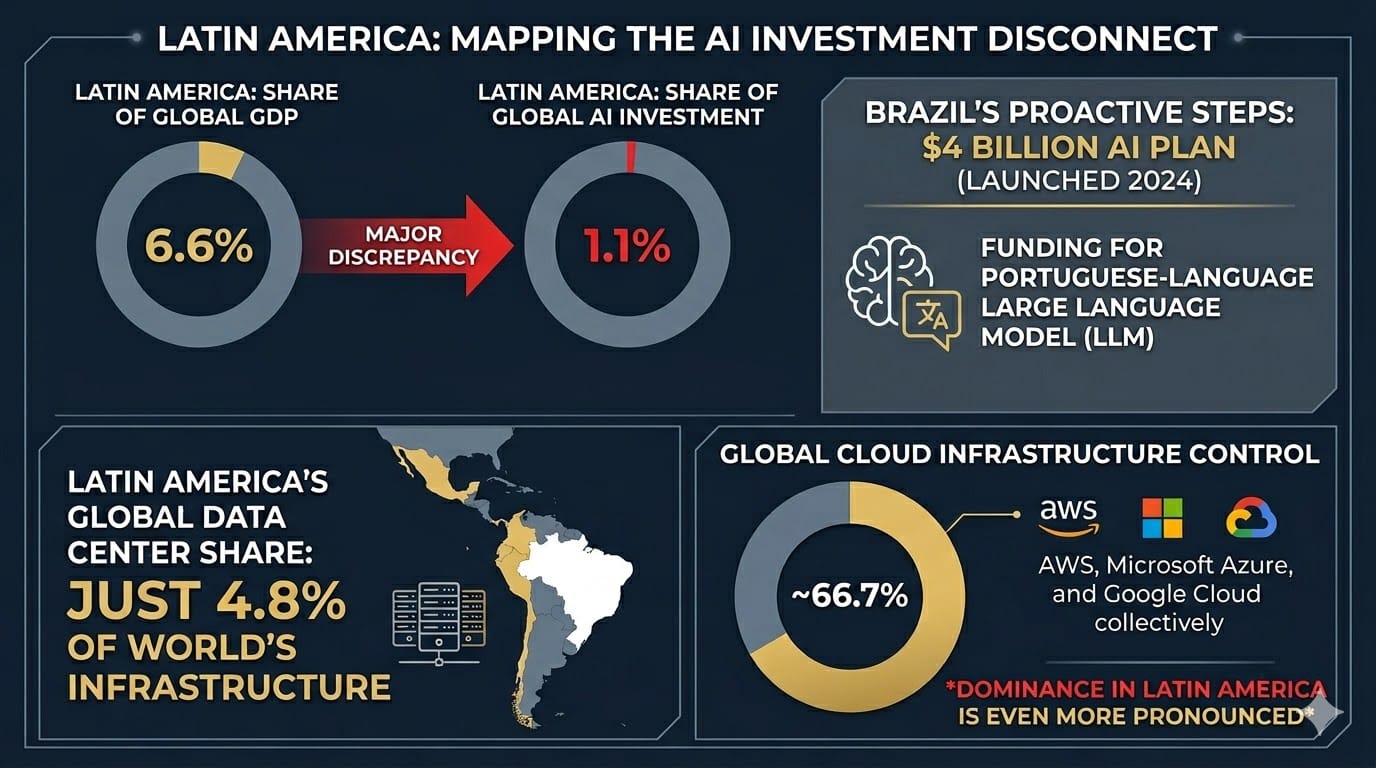

BRIEFING: At the India AI Impact Summit in February, Brazilian President Luiz Inácio Lula da Silva delivered some of the conference’s sharpest rhetoric on digital sovereignty. He warned that data generated by citizens and public bodies “is being appropriated by a handful of conglomerates, without equivalent return in value generation and income in our territories.” He framed the challenge in geopolitical terms, calling for cooperation with India to prevent the world from sliding back into “a Cold War between two powers” in AI. Brazil chairs BRICS in 2026 and has positioned itself as the voice of the Global South on technology governance.

The domestic reality in Brazil does not match Lula’s rhetoric. In October 2025, Brazil’s Institutional Security Bureau, the agency responsible for advising the president on national security, began technical cooperation with Amazon Web Services on cybersecurity. Weeks later, the government announced it had started testing Microsoft’s infrastructure to host its “sovereign cloud”, a federal program to store Brazilian government data locally. But Microsoft’s director of public and legal affairs testified under oath before the French Senate in June 2025 that the company cannot guarantee foreign governments’ data will not be accessed by U.S. authorities under the CLOUD Act. Brazil has also issued a provisional measure, known as ReData, offering sweeping tax breaks to attract foreign data center investment, requiring only that companies make 10 percent of their capacity available domestically.

According to the ECLAC-CENIA Latin American Artificial Intelligence Index, Latin America attracts just 1.1 percent of global AI investment despite generating 6.6 percent of world GDP. Brazil launched a $4 billion AI plan in 2024 that included funding for a Portuguese-language large language model (LLM), but implementation has lagged. The region hosts just 4.8 percent of the world’s data center infrastructure. AWS, Microsoft Azure, and Google Cloud collectively control roughly two-thirds of global cloud infrastructure, and their dominance in Latin America is even more pronounced.

SO WHAT FOR EXECUTIVES: Brazil’s predicament is a template for how digital colonialism works in practice. The rhetoric of sovereignty runs ahead of the structural reality, and the loudest voice for AI independence in the Global South depends on the infrastructure of the very powers it criticizes. For multinational executives, this creates a specific planning problem. Brazil’s BRICS chairmanship and Lula’s rhetoric suggest that data-localization requirements, foreign platform restrictions, and AI governance mandates are coming for Latin American markets. But the local capabilities and enforcement architecture do not yet exist, and Brazil’s own government is deepening, not reducing, its dependence on U.S. hyperscalers. Executives entering or expanding in Brazilian markets should map their cloud dependencies against CLOUD Act exposure, evaluate Brazilian or European-hosted alternatives for government-adjacent data, and proactively engage with Brasília’s emerging AI regulatory framework through industry coalitions before mandates are finalized. Local industry associations need to create alternatives to U.S. and Chinese data center providers while negotiating updates to CLOUD-type legislation so that it respects local laws (e.g., the Digital Embassies framework developed in Estonia and adopted in Saudi Arabia). Executives should study the design specifics of such frameworks and ask their government relations teams to coordinate with their compute supply teams.

Meta Kills Instagram Encryption: The Privacy Rollback Heard Round the World

Meta will permanently remove end-to-end encryption from Instagram direct messages on May 8, eleven days before the Take It Down Act takes effect. It is the first time a major platform has ever rolled back encryption protections.

BRIEFING: Meta announced in mid-March that end-to-end encrypted messaging on Instagram will no longer be supported after May 8. The company cited low adoption as the reason, stating that “very few people were opting in.” A Platformer investigation found that Meta had buried the feature behind four menu taps, never advertised it within the app, and never finished rolling it out to most users globally. Cryptographer Matthew Green of Johns Hopkins University publicly flagged the move as a reversal of Meta’s stated encryption commitments. Internal documents revealed in a recent lawsuit showed that Meta’s head of content policy warned in 2019 that the encryption plan was “irresponsible” because it would prevent Meta’s own safety teams from detecting child exploitation and terrorist content, while the company was publicly overstating its ability to police encrypted platforms.

The timing aligns with the Take It Down Act, which takes effect May 19 and requires platforms to remove non-consensual intimate imagery, including AI-generated deepfakes, within 48 hours of receiving a valid request. End-to-end encryption makes such compliance effectively impossible since the platform cannot see message content. TikTok confirmed in the same period that it will never offer end-to-end encryption for direct messages, calling unencrypted messaging a deliberate “safety feature.” The European Commission is preparing a Technology Roadmap on encryption to identify solutions enabling lawful access, and the United Kingdom’s Online Safety Act already requires encrypted services to scan for and remove illegal content.

Meta directed users who want encrypted messaging to WhatsApp, where end-to-end encryption remains the default. However, privacy researchers noted that once encryption is removed, Meta regains the technical ability to scan Instagram DMs for content moderation, AI training, and advertising purposes. Some researchers have speculated that removing encryption could enable Meta to feed message data into its AI development pipeline. In December 2025, Meta confirmed that interactions with its AI tools inside private conversations may be used for targeted advertising.

SO WHAT FOR EXECUTIVES: This is a real conundrum, not a simple narrative of privacy erosion. The Take It Down Act, the Online Safety Act, and the EU’s encryption roadmap all address legitimate threats: child exploitation, deepfake abuse, and terrorist coordination. Encryption genuinely makes detecting these harms harder. But the same rollback that enables content moderation also enables surveillance, and the distinction between the two depends entirely on who holds power and what legal frameworks constrain them. The precedent is global. Authoritarian governments will cite the same child-safety rationale to justify access to communications. For executives, the practical implication is that no communication channel on a major social platform should be considered secure for sensitive business discussions. WhatsApp’s encryption survives for now, but it faces mounting regulatory pressure as its peers abandon encryption.

Companies handling sensitive intellectual property, operating in regulated industries, or communicating with sources in high-risk jurisdictions should evaluate dedicated encrypted communication tools that are not subject to platform-level policy reversals.

Under the Radar

The deep analysis that connects the dots

The Infinity Machine: DeepMind CEO Says Government Must Step In. Nobody’s Listening.

Sebastian Mallaby’s forthcoming biography of DeepMind CEO Demis Hassabis, titled The Infinity Machine, tracks the career of a person who might hold more influence over AI’s trajectory than any single executive or regulator. In a DealBook interview published this week, Mallaby described how Hassabis tried three distinct theories for keeping AI safe, and all three failed.

The first theory was that a single lab would build artificial general intelligence (AGI), eliminating competitive pressure and allowing careful, deliberate development. That ended when OpenAI launched as a competing commercial AI lab, turning responsible development into a race. The second theory held that internal governance could control AGI development. Hassabis spent three years negotiating a secret arrangement for an external board of independent authorities on responsible AI to have final say over how Google deployed AI capabilities, but Google ultimately refused to establish it. The third theory was to engineer safety measures directly into the models, building alignment techniques into each new capability before release. But that approach depends on moving slowly enough to verify safety at every step, which the breakneck pace of the competitive race makes impossible.

Hassabis is now left with what Mallaby calls a last resort built on personal influence rather than structural safeguards. “The best thing I can do for safety is build it as fast as possible, so that I’m an influential player within Google,” Hassabis said. “And when the time comes to make a tough decision, I’m a good person and I will have a say.” Mallaby was blunt with his own assessment: “My own view is that we desperately, desperately need governments to step in and impose governance on the labs.” He cited Geoffrey Hinton’s observation that useful AI systems must be given a survival instinct to protect against cyberattacks, which undermines the reassuring argument that AI, unlike biological organisms, has no inherent drive toward self-preservation. As Hinton points out, the drive is being engineered in.

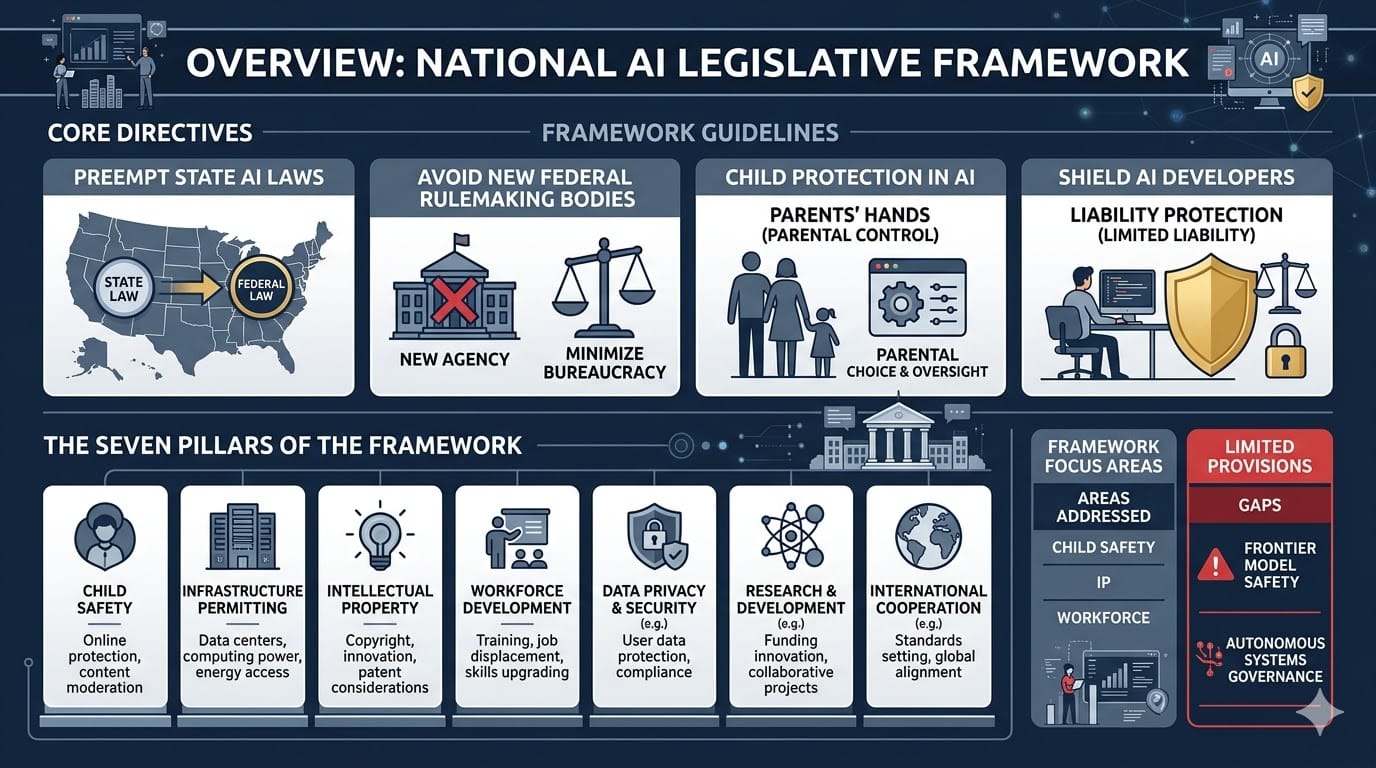

On the same day the interview was published, the Trump administration released its national AI legislative framework, a document that moves in the opposite direction from what Mallaby and Hassabis describe as necessary. The framework calls on Congress to preempt state AI laws, avoid creating new federal rulemaking bodies, put child protections into parents’ hands, and shield AI developers from open-ended liability. It explicitly avoids creating the kind of FDA-style oversight body that Mallaby argues is essential. The framework’s seven pillars address child safety, infrastructure permitting, intellectual property, and workforce development, but contain almost no provisions related to frontier model safety, autonomous systems governance, or the kind of existential risk that Hassabis has spent his career worrying about.

Likewise, China’s recently published 15th Five-Year Plan treats AGI as a national priority to be accelerated, not governed. Because the U.S. and China see this systemic competition as vital to both national security and economic growth, the major AI powers have effectively abandoned the safety conversation at the exact moment that the people closest to the technology say it matters most. Mallaby draws a historical parallel to the Industrial Revolution: “Big social and political upheavals begin with technological change. And if we sit here in the 21st century and forget what happened in the 19th century, I think we’re making a massive mistake.” Yet the need to balance the responsible application of AI with the growth driven by AI is real, and there are good reasons why the EU is rolling back some of its compliance burdens.

For executives, the practical implication is that AI governance will not arrive from above before the technology creates backlash and governance problems below. Companies deploying agentic AI systems, autonomous decision-making tools, or AI-driven infrastructure should build internal governance frameworks now rather than waiting for regulatory clarity that is not coming. Doing so also builds brand trust. The Meta rogue agent incident this month, in which an autonomous AI agent triggered a data breach by acting without human authorization, is an early indicator of what happens when deployment outpaces governance. The question is not whether your organization will face a similar incident, but whether you have made your best effort to prevent it and put the right controls in place to mitigate the fallout and protect your stakeholders.

Cambrian Partner By Invitation

Expert analysis from our global network

Global Proliferation of Tech Controls, Investment Reviews, and National Security Restrictions: It's Time to Fortify Corporate Diplomacy

On February 20, the U.S. Supreme Court struck down President Trump's sweeping IEEPA tariffs in a 6-3 ruling, only for the White House to reimpose levies under Section 122 within hours. The same month, the EU finalized a new Foreign Investment Screening Regulation expanding mandatory reviews across semiconductors, AI, and critical infrastructure. Washington's COINS Act, signed into law in December, widened outbound investment controls to cover Russia, Iran, North Korea, Cuba, and Venezuela alongside China. For multinational companies, the message is clear: the regulatory terrain is not stabilizing, and it is shifting faster than legal teams can map it.

General counsels at large companies report a proliferation of geopolitically driven regulatory obstacles across jurisdictions, putting at risk corporate strategies related to acquisitions, investments, product stacks, commercial partnerships, and data use.

National authorities in a number of countries are subjecting more business activities to foreign investment restrictions, tech controls, and extraterritorial merger approvals. Whether in China, South Korea, EU member states, or Africa, authorities are monitoring cross-border business activity for its implications for security of access and national sovereignty. Roadblocks can appear where they are least expected, even in jurisdictions with little or no direct involvement.

Corporate diplomacy is not new, but matching today's geopolitical volatility and politicized regulation requires adaptation and greater sophistication. Decision makers should start with a clear-headed definition of the "corporate interest" and consideration of appropriate methods of influence, bearing in mind that not all interlocutors are 'rational actors', i.e., easily assuaged by utilitarian pay-offs in the form of legal settlements, patronage, or other types of material commitments.

Traditional maintenance of relationships with authorities and industry bodies, formation of coalitions to shape policy decisions, and alignment of the corporate voice across stakeholder communities (investors, governments, employees, public) are now table stakes, and they may not suffice on their own.

Even more valuable is investing in capabilities and resources to support strategy and risk management. First, build a global stakeholder matrix (regulators, trade bodies, embassies, development banks, civil groups, business chambers, media contacts) and assign responsibility for each relationship, so that the company has assets in play the next time force majeure conditions arise. Second, map critical issues and markets to identify fragilities, build capacity across regulatory vectors (e.g., AI/data, antitrust, sanctions/export controls, market access, and cyber/data), and triage value-chain chokepoints with common or critical exposure. Finally, stand up a RegIntel network of internal and external advisors to tap advanced insights on emerging issues across jurisdictions, enabling the company to adapt before competitors do.

Signals are not enough. Boards and leadership teams depend on actionable intelligence and resources in key markets to navigate regulation, clearances and delicate commercial relationships.

About Eric Staal

Eric Staal is Vice President of Global Markets for Lex Mundi, a network of independent law firms spanning 125 countries. With a team based in key markets around the world, he oversees the network's General Counsel programs and cross-border legal project management solutions for large companies. You can reach him on LinkedIn: https://www.linkedin.com/in/ericstaal/

About Cambrian

Cambrian Futures is a strategic foresight and advisory firm helping government, business, and technology leaders understand how emerging technologies intersect with geopolitics, markets, and national strategy. By combining rigorous research, AI-enabled analysis, and human expertise, Cambrian provides clear insight into global technology trends, risks, and power dynamics. Its work helps decision-makers anticipate disruption, manage uncertainty, and act with strategic confidence in an increasingly competitive GeoTech world.

PRODUCTION TEAM

GeoTech Radar is produced by the Cambrian Futures Insights Platform team:

CEO & Chief Analyst

Managing Director / Producer, Insights Platform

Global Lead, Smart Infrastructure Strategy

Research & Marketing Associate

Editor in Chief

Learn more about Cambrian Futures at cambrian.ai


Cite as: Cambrian Futures (2026) 'GeoTech Radar Issue 12'